Turn every policy into automated workflows with built-in enforcement and audit-ready proof.

What Is an AI Compliance Agent?

An AI compliance agent is software that monitors work, compares activity against policies or control rules, routes exceptions, and captures evidence so that compliance is enforced while work happens.

It is different from a chatbot that answers policy questions. A useful AI compliance agent sits inside workflows, watches for risk, takes approved actions, and gives humans a clear trail for review.

This guide explains what an AI compliance agent does, how it works, where it creates value, what humans still need to control, and how teams can make the agent operational without turning compliance into a black box.

In this article, we are going to cover:

- What is an AI compliance agent?

- What an AI compliance agent actually does

- What is an AI compliance agent in daily operations?

- Where AI compliance agents create value

- What humans still need to control

- How Process Street makes AI compliance agents operational

- FAQs

What is an AI compliance agent?

An AI compliance agent is an agentic system built to help enforce compliance rules across real business processes. It can monitor events, read workflow context, check policies, identify exceptions, recommend fixes, trigger follow-up tasks, and preserve audit evidence.

The simplest way to understand it is this: traditional compliance software records what people report, while an AI compliance agent helps inspect the work itself. It does not replace the compliance team. It gives that team a faster control surface.

AI compliance agent versus compliance chatbot

A compliance chatbot answers questions such as, “What does this policy say?” An AI compliance agent answers a more operational question: “Is this process following the policy right now, and what should happen if it is not?”

That distinction matters because compliance failure usually happens in execution. The policy exists, the checklist exists, and the training exists, but someone skips a step, misses a threshold, uses stale evidence, or routes approval to the wrong person.

AI compliance agent versus rules engine

A rules engine applies predefined logic. That is still useful, especially for hard controls such as approval thresholds, required fields, retention rules, and segregation of duties. An AI compliance agent adds context handling around those controls.

For example, a rules engine can require approval when a vendor risk score is high. An AI compliance agent can summarize why the score looks high, identify missing evidence, draft the review task, and route the exception to the right owner.
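The split between the two roles can be sketched in a few lines of Python. This is a minimal illustration, not a description of any product's internals: the threshold, the evidence names, and the `VendorReview` structure are all assumptions made for the example, and the "agent" step here is plain code standing in for what would normally involve a language model.

```python
from dataclasses import dataclass, field

# Hypothetical threshold and evidence names, chosen only for illustration.
HIGH_RISK_THRESHOLD = 70
REQUIRED_EVIDENCE = {"security_questionnaire", "soc2_report", "dpa"}


@dataclass
class VendorReview:
    vendor: str
    risk_score: int
    evidence: set[str] = field(default_factory=set)


def rule_requires_approval(review: VendorReview) -> bool:
    """Rules-engine step: a fixed threshold check with no context."""
    return review.risk_score >= HIGH_RISK_THRESHOLD


def build_review_task(review: VendorReview, owner: str) -> dict:
    """Agent-style step: package context around the rule outcome."""
    missing = sorted(REQUIRED_EVIDENCE - review.evidence)
    return {
        "vendor": review.vendor,
        "needs_approval": rule_requires_approval(review),
        "missing_evidence": missing,
        "assigned_to": owner,
    }


task = build_review_task(
    VendorReview("Acme Hosting", 82, {"soc2_report"}),
    owner="security-lead",
)
print(task)
```

The rule itself stays deterministic and easy to audit. The surrounding task is where the agent adds value, by telling the reviewer what is missing and who owns the exception.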

What an AI compliance agent actually does

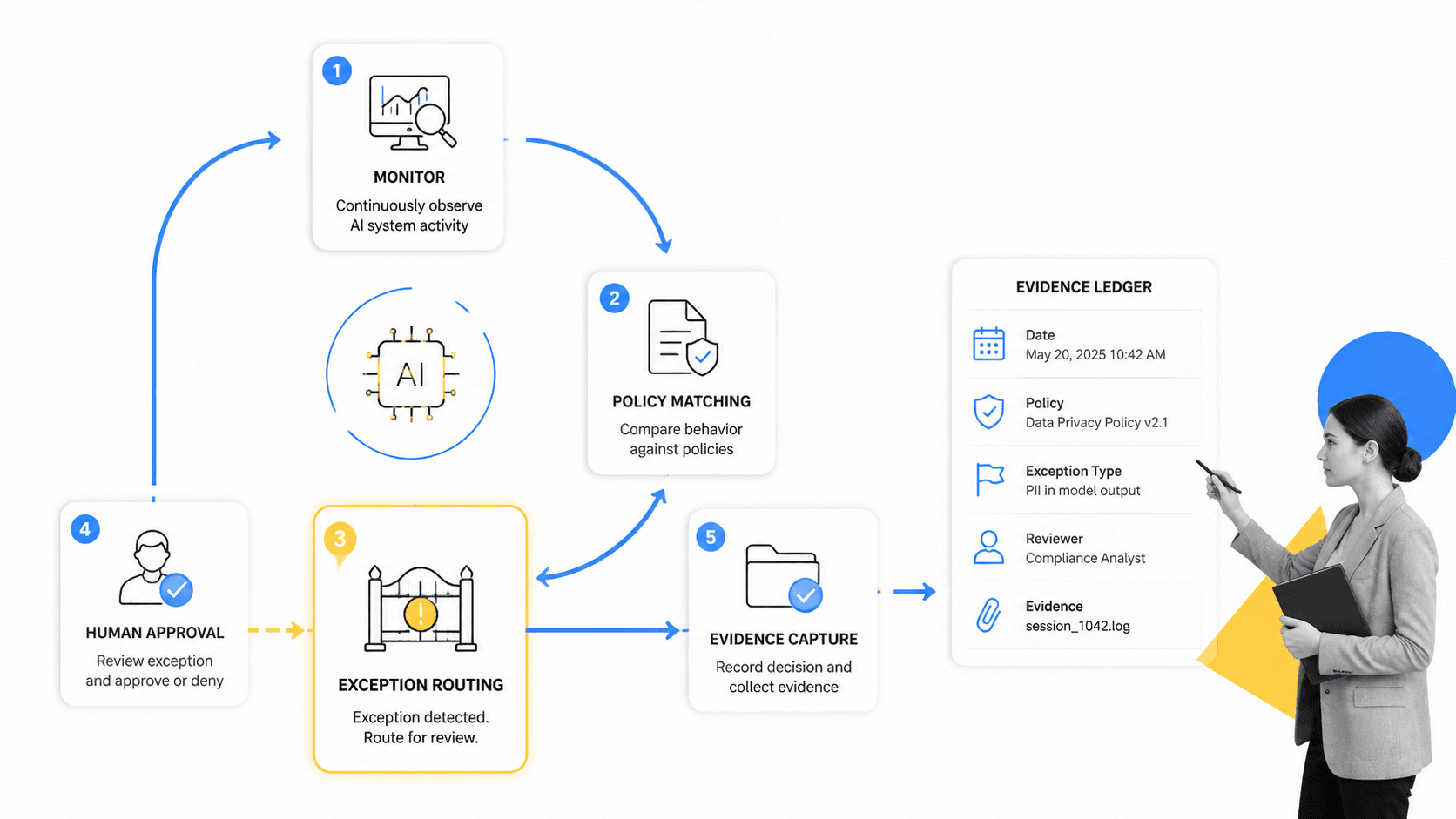

An AI compliance agent does five jobs: it observes workflow activity, interprets policy requirements, detects exceptions, routes decisions, and preserves proof. If it only summarizes documents, it is not doing the core compliance job.

Monitor work in context

The agent needs context from the work itself: tasks, owners, approvals, files, form fields, status changes, due dates, system events, and previous exceptions. This is why compliance agents perform best when they run inside a structured workflow system rather than a loose document repository.

Related Process Street pages on compliance operations, compliance workflow, and workflow automation software explain the operating layer around that work.

Match activity to controls

The agent compares workflow activity to policy, procedure, framework, or regulatory expectations. Some checks are deterministic, such as a required approval before release. Others are contextual, such as whether evidence looks incomplete or whether a procedure appears misaligned with a new policy update.
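A deterministic check of the kind mentioned above, a required approval before release, might look like the following sketch. The event shape (`type` and `at` keys) is an assumption for illustration; a real workflow system would expose its own event format.

```python
from datetime import datetime


def approval_precedes_release(events: list[dict]) -> bool:
    """Return True only if an approval is timestamped before the first release."""
    approvals = [e["at"] for e in events if e["type"] == "approval"]
    releases = [e["at"] for e in events if e["type"] == "release"]
    if not releases:
        return True  # nothing released yet, so the control is not violated
    if not approvals:
        return False  # released with no approval on record
    return min(approvals) < min(releases)


# Hypothetical run in which the release happened before the approval.
events = [
    {"type": "release", "at": datetime(2024, 5, 2, 9, 0)},
    {"type": "approval", "at": datetime(2024, 5, 2, 11, 30)},
]
compliant = approval_precedes_release(events)
print(compliant)
```

Contextual checks, such as judging whether evidence looks incomplete, do not reduce to a function like this, which is why they are better routed to a human with the relevant context attached.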

AI governance references such as the NIST AI Risk Management Framework, ISO/IEC 42001, and the EU AI Act overview all point toward a similar idea: AI needs defined risk controls, oversight, and evidence.

Route exceptions to people

A strong AI compliance agent does not hide uncertain decisions. It escalates exceptions with the relevant context, suggested next step, and supporting evidence. Human review stays visible instead of disappearing into chat threads.

This is where agentic compliance becomes practical. The agent can reduce manual review volume, but the compliance team still owns judgment, policy interpretation, and final accountability for sensitive decisions.

What is an AI compliance agent in daily operations?

An AI compliance agent works by connecting a policy source, a workflow source, a decision layer, an action layer, and an evidence layer. If one layer is missing, the agent may look impressive and still fail a real audit.

Policy source

The policy source gives the agent its standard. This may include SOPs, internal policies, control frameworks, contractual obligations, security requirements, quality procedures, or regulatory guidance. The source must be versioned and owned, otherwise the agent can enforce stale rules at scale.
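One way to make "versioned and owned" checkable is to refuse to enforce a policy that has no owner or has not been reviewed recently. The sketch below assumes a hypothetical `PolicyVersion` record and a 365-day review cycle; both are illustrative choices, not requirements from any framework.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class PolicyVersion:
    policy_id: str
    version: str
    owner: str
    last_reviewed: date


def is_enforceable(policy: PolicyVersion, today: date, max_age_days: int = 365) -> bool:
    """A policy is enforceable only if it has an owner and a recent review."""
    has_owner = bool(policy.owner.strip())
    is_fresh = (today - policy.last_reviewed) <= timedelta(days=max_age_days)
    return has_owner and is_fresh


today = date(2024, 6, 1)
current = PolicyVersion("vendor-risk", "3.1", "compliance-lead", date(2024, 1, 15))
stale = PolicyVersion("data-retention", "1.0", "legal", date(2022, 3, 1))
print(is_enforceable(current, today), is_enforceable(stale, today))
```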

Workflow source

The workflow source tells the agent what is happening. It includes tasks, assignments, status changes, approvals, uploaded files, due dates, and exception notes. Without workflow context, the agent is guessing from fragments.

Decision and action layer

The decision layer evaluates whether activity matches the control. The action layer then creates a task, sends an alert, blocks a step, requests evidence, drafts a remediation plan, or routes approval.
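The two layers can be kept separate in code so that each one is testable on its own. In this sketch the decision outcomes, the step fields, and the action names are hypothetical; the point is only that the decision function returns a verdict and a separate mapping chooses the action.

```python
from enum import Enum


class Decision(Enum):
    COMPLIANT = "compliant"
    MISSING_EVIDENCE = "missing_evidence"
    NEEDS_APPROVAL = "needs_approval"
    VIOLATION = "violation"


# Hypothetical mapping from decision outcome to workflow action.
ACTIONS = {
    Decision.COMPLIANT: "continue",
    Decision.MISSING_EVIDENCE: "request_evidence",
    Decision.NEEDS_APPROVAL: "route_approval",
    Decision.VIOLATION: "block_step",
}


def decide(step: dict) -> Decision:
    """Decision layer: evaluate one workflow step against simple controls."""
    if step.get("prohibited"):
        return Decision.VIOLATION
    if not step.get("evidence_attached"):
        return Decision.MISSING_EVIDENCE
    over_limit = step.get("amount", 0) > step.get("approval_limit", 0)
    if over_limit and not step.get("approved"):
        return Decision.NEEDS_APPROVAL
    return Decision.COMPLIANT


def act(step: dict) -> dict:
    """Action layer: translate the decision into a single next action."""
    decision = decide(step)
    return {"decision": decision.value, "action": ACTIONS[decision]}


result = act({"evidence_attached": True, "amount": 5000, "approval_limit": 1000})
print(result)
```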

Process Street resources such as Approvals and Run Links show how workflow actions can be structured so decisions happen in the process, not after the fact.

Evidence layer

The evidence layer records what the agent saw, what policy it applied, what action it took, what human approved, and what changed afterward. This is the difference between automation that feels helpful and automation that can survive audit scrutiny.
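As a rough sketch, an evidence record can carry exactly the fields listed above. The example also chains each record to the previous one with a SHA-256 hash so that later edits are detectable; that tamper-evidence technique is an addition made for illustration, not something the article or any specific product prescribes, and the field names are hypothetical.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_evidence(log: list[dict], entry: dict) -> dict:
    """Append an entry, linking it to the hash of the previous record."""
    prev_hash = log[-1]["hash"] if log else ""
    body = {**entry, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check that the chain is unbroken."""
    prev = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log: list[dict] = []
append_evidence(log, {
    "observed": "release requested without approval",
    "policy": "change-management v2.3",
    "action": "block_step",
    "approved_by": None,
})
append_evidence(log, {
    "observed": "approval granted",
    "policy": "change-management v2.3",
    "action": "unblock_step",
    "approved_by": "release-manager",
})
print(verify(log))
```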

The SEC comments on AI and markets and FTC guidance on AI claims are reminders that AI claims and AI-driven decisions need care. Compliance teams should be able to explain what the system did and why.

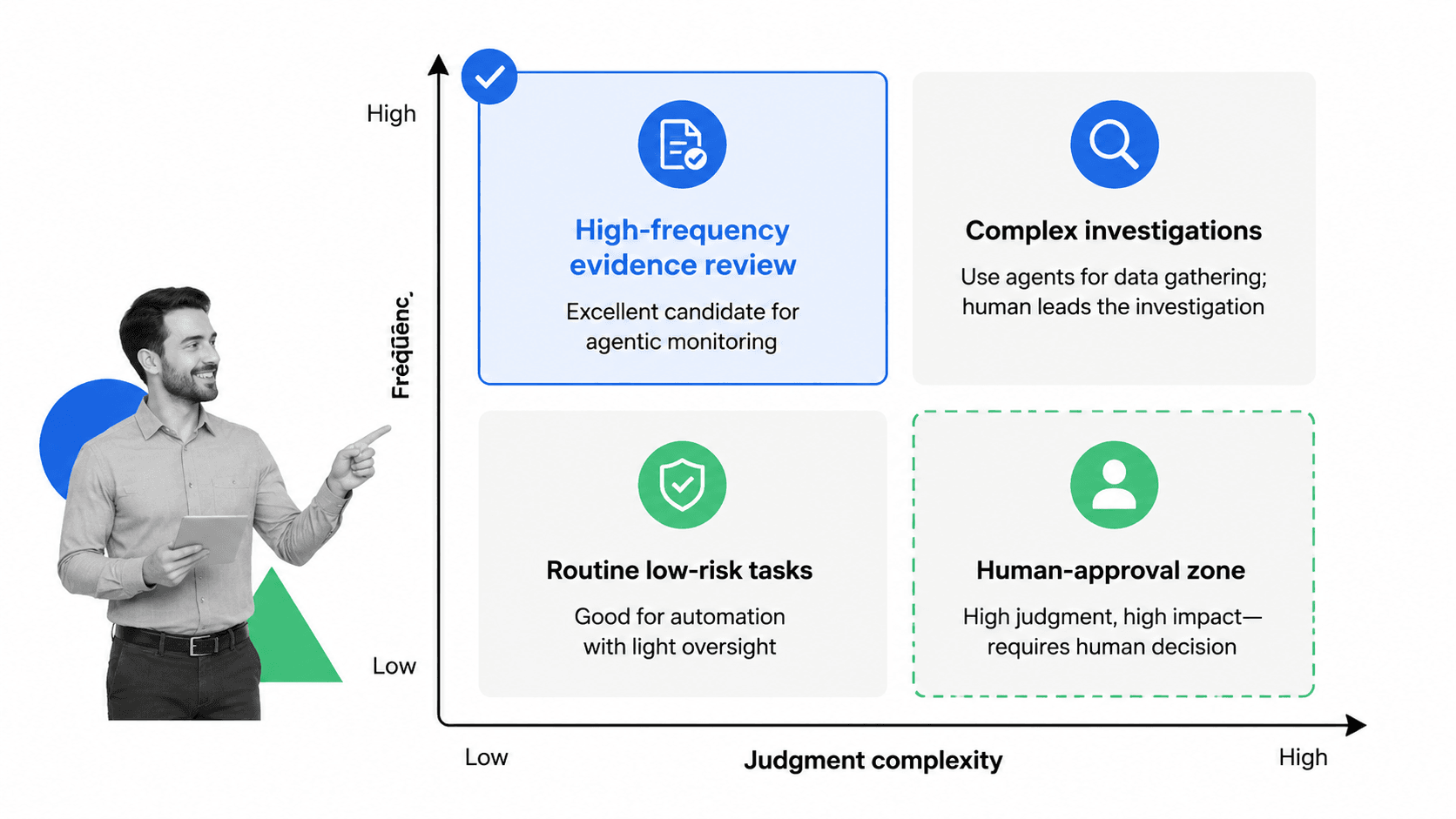

Where AI compliance agents create value

AI compliance agents create the most value in repeatable, evidence-heavy work where the risk is knowable and the workflow is structured. They are weakest when work is ambiguous, facts are incomplete, or legal judgment depends on nuance the system cannot verify.

Good fits

- Evidence collection for recurring audits.

- Policy acknowledgment and training follow-up.

- Vendor risk intake checks.

- Control testing reminders and exception routing.

- SOP review and approval workflows.

- Change management checks before release.

Teams researching adjacent systems may also compare compliance management software, audit management software, and policy management software.

Poor fits

Poor fits include one-off legal interpretation, high-stakes disciplinary decisions, undocumented tribal judgment, and workflows where the system cannot access reliable data. In those cases, the agent may still assist with evidence gathering or drafting, but it should not own the decision.

The useful boundary is simple: let the agent handle repeatable monitoring and evidence work, then require human review when the decision changes rights, money, employment, legal exposure, customer impact, or regulatory posture.

What humans still need to control

Humans still need to control policy design, risk appetite, final judgment, system access, exception approval, and model governance. An AI compliance agent should make those controls easier to operate, not obscure them.

Human approval for sensitive actions

Sensitive actions need explicit approval paths. The agent can prepare the decision packet, collect evidence, and recommend next steps, but the approval should show who made the decision and what they saw at the time.

Clear operating limits

Define what the agent can do automatically, what it can recommend, and what it must escalate. Limits should cover systems it can access, data it can process, actions it can trigger, spend or risk thresholds, and situations that require human signoff.
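Operating limits are easier to review when they live in one explicit table rather than in scattered prompts. A minimal sketch, with hypothetical action names and a hypothetical spend threshold, is shown below. Anything not listed falls through to escalation, so new actions are denied by default.

```python
# Hypothetical limits; real values would come from the compliance team.
AUTO_ACTIONS = {"assign_task", "request_evidence", "send_reminder"}
RECOMMEND_ONLY = {"update_procedure", "close_exception"}
SPEND_LIMIT = 1_000


def permission_for(action: str, amount: float = 0.0) -> str:
    """Return 'auto', 'recommend', or 'escalate' for a proposed action."""
    if amount > SPEND_LIMIT:
        return "escalate"  # spend threshold overrides everything else
    if action in AUTO_ACTIONS:
        return "auto"
    if action in RECOMMEND_ONLY:
        return "recommend"
    return "escalate"  # unknown actions always need human signoff


for proposed in ("request_evidence", "close_exception", "terminate_vendor"):
    print(proposed, "->", permission_for(proposed))
```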

Ongoing review

Compliance agents should be reviewed like other controls. Sample decisions, inspect evidence quality, test edge cases, review false positives and false negatives, and update policies when the business changes.
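Reviewing false positives and false negatives can start with a small sample of agent decisions that a human has re-checked. The sketch below assumes each sample is a pair of booleans, whether the agent flagged the item and whether the reviewer judged it a real issue. The sample data is made up for illustration.

```python
def review_rates(samples: list[tuple[bool, bool]]) -> dict:
    """Compute error rates from (agent_flagged, reviewer_confirmed_issue) pairs."""
    tp = sum(1 for flagged, actual in samples if flagged and actual)
    fp = sum(1 for flagged, actual in samples if flagged and not actual)
    fn = sum(1 for flagged, actual in samples if not flagged and actual)
    tn = sum(1 for flagged, actual in samples if not flagged and not actual)
    return {
        # Share of clean items the agent flagged anyway.
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        # Share of real issues the agent missed.
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


samples = [
    (True, True), (True, False), (False, False),
    (False, False), (False, True), (True, True),
]
rates = review_rates(samples)
print(rates)
```

For compliance work the false negative rate usually deserves the closer look, since a missed issue is harder to recover from than an unnecessary review.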

Process Street pages on AI-driven compliance and digital compliance officer go deeper on why compliance roles are shifting from manual checking to system-level enforcement.

How Process Street makes AI compliance agents operational

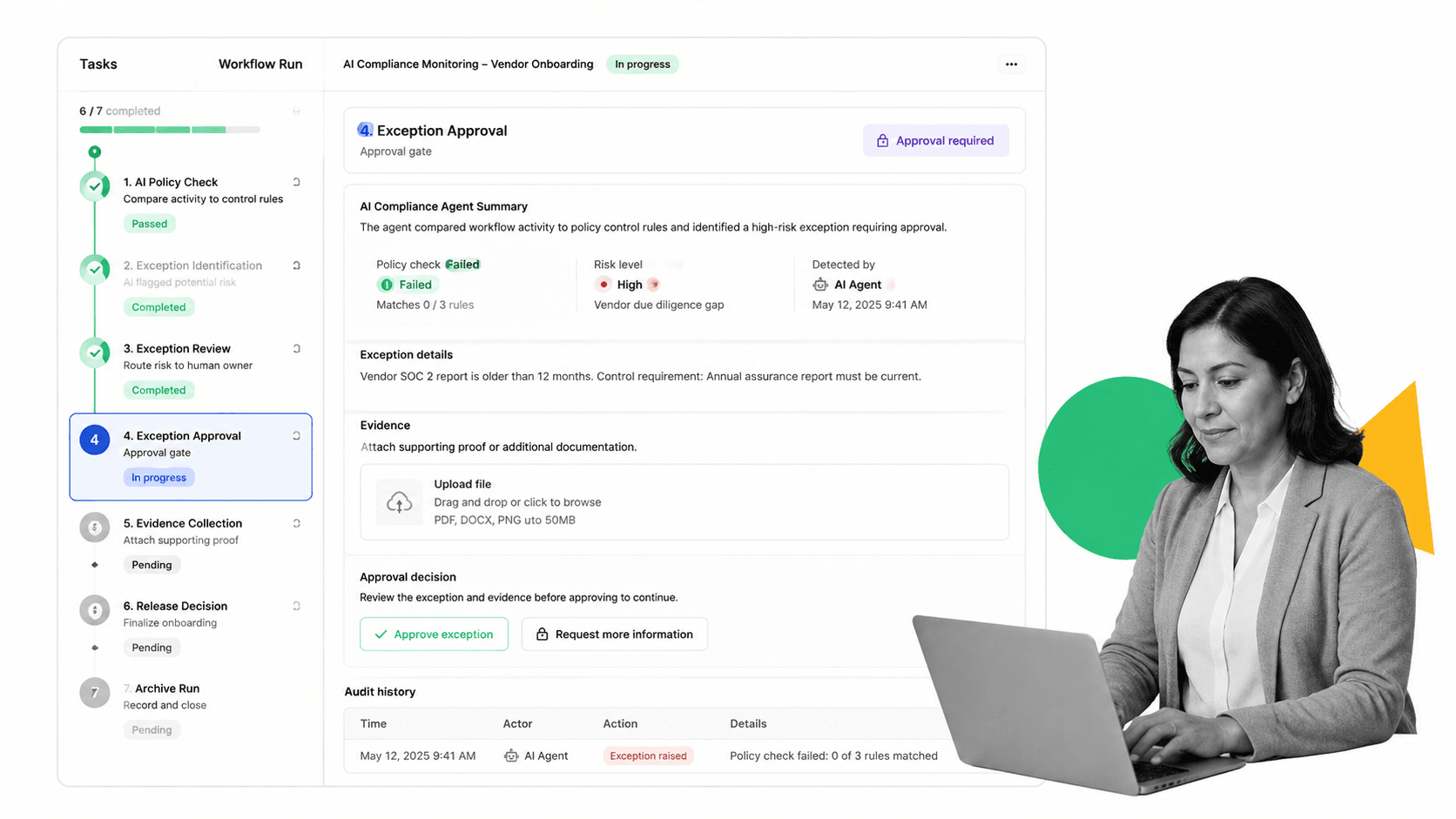

Process Street is a Compliance Operations Platform that makes AI compliance agents operational by connecting policies, workflows, approvals, and evidence in one execution layer.

Cora, Process Street's AI compliance agent, monitors workflows, flags risk, suggests updates, and helps teams keep compliance tied to the work itself. The goal is not another passive dashboard. The goal is compliance by default.

Turn policies into executable workflows

An AI compliance agent is only as good as the workflow it can inspect. Process Street lets teams turn SOPs, controls, and review procedures into executable workflows with tasks, owners, forms, approvals, due dates, and evidence fields. Templates such as the internal audit checklist and risk management process template help teams start with concrete operating patterns.

Keep the agent inside the control surface

The agent should work inside defined controls: assign tasks, request evidence, raise exceptions, suggest updates, and route approvals. That gives compliance leaders a system they can inspect and improve.

Process Street has direct, universal integrations to 5,000+ systems. Need a new one? An AI agent builds it on the fly. That matters because compliance work spans HR, finance, legal, ticketing, e-signature, CRM, data warehouses, and systems of record.

Create proof as work happens

Audit-ready compliance is not a report built after the fact. It is the record created while work is done: which policy applied, who completed the task, what evidence was attached, what exception was raised, and who approved the outcome.

That is the practical answer to what an AI compliance agent is: a control system for AI-assisted compliance work, not a magic reviewer. The best version helps teams enforce policy, track steps, and prove compliance without slowing every process down.

FAQs

What is an AI compliance agent?

An AI compliance agent is software that monitors workflow activity, checks it against policies or controls, routes exceptions, and captures audit evidence. It helps compliance teams enforce rules while work happens instead of waiting for manual review after the fact.

How does an AI compliance agent work?

An AI compliance agent connects policy sources, workflow data, decision rules, action steps, and evidence records. It observes the process, identifies gaps or exceptions, triggers the right next step, and preserves a trail for human review.

What is the difference between an AI compliance agent and compliance software?

Traditional compliance software often stores policies, tracks tasks, or reports status. An AI compliance agent is more active: it can monitor work, interpret context, suggest remediation, route approvals, and help keep evidence current.

Where should teams use AI compliance agents?

Teams should use AI compliance agents in repeatable, evidence-heavy workflows such as audit prep, policy acknowledgments, vendor risk intake, control testing, SOP reviews, and exception routing. High-stakes judgment should still include human approval.

What risks should humans still control?

Humans should control policy design, final judgment, access permissions, sensitive approvals, risk appetite, and ongoing review of agent behavior. The agent should make those controls easier to run, not hide them.

How does Process Street support AI compliance agents?

Process Street supports AI compliance agents by connecting policies to executable workflows with assignments, approvals, evidence, audit history, and integrations. Cora monitors workflows and helps teams flag risk, suggest updates, and keep compliance tied to execution.