There’s a big difference between successful software companies and those shoddy unverified apps you get off the app store:

While small-time apps aren’t heavily used, and the creator won’t receive many complaints or bad press if anything breaks, Google and Facebook are used by billions of people worldwide.

If a bug affects 0.01% of the user base in a small app, it’s barely worth the energy to fix. For Google or Facebook, 0.01% of a billion users is 100,000 people, which can mean thousands of complaints and a possible media scandal to deal with. And we all know what the price of that can be.

So, when it comes to studying quality assurance, there are no better examples than two of the biggest Internet companies in the world.

I’ve deliberately not chosen to give Microsoft the time of day in this post, because I’d say their QA is pretty damn weak for their size.

…However, read on to pick up tips from Facebook and Google on how to make software that doesn’t break down, cost you more money, and cause frustration for your customers.

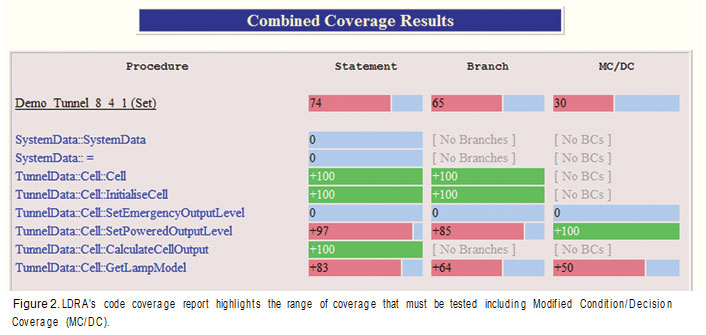

Code coverage: a quality control metric

Code coverage is expressed as a percentage: if your tests execute 50% of the code base when they run, you have 50% code coverage.

This includes:

- Statement coverage: has every statement been executed by at least one test?

- Branch coverage: has every outcome of every decision point (e.g. if, switch) been taken?

- Path coverage: has every possible route through the program been run without error?

And so, 100% branch coverage implies 100% statement coverage, just as 100% path coverage implies 100% branch and statement coverage.
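The reverse doesn’t hold, and a small Python sketch shows why. The function `apply_discount` and its numbers are hypothetical, made up purely for illustration. One test with `is_member=True` executes every statement, yet the branch it never takes hides a crash.

```python
def apply_discount(price_cents, is_member):
    """Hypothetical example: members get 10% off."""
    discount_percent = None
    if is_member:
        discount_percent = 10
    # Bug: when is_member is False, discount_percent is still None.
    return price_cents * (100 - discount_percent) // 100


# This single call runs every statement above: 100% statement coverage.
assert apply_discount(2000, True) == 1800

# Branch coverage also demands the case where the `if` body is skipped,
# and that case raises a TypeError.
try:
    apply_discount(2000, False)
except TypeError:
    print("the untested branch is broken")
```

A coverage report based on statements alone would call this function fully tested, which is part of why some engineers distrust the number.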

The deceptive thing about code coverage is that as soon as you start combining chunks of code and testing all possible paths and outcomes, you quickly rack up a near-infinite number of tests to run, even on medium-sized projects.

The complexity of code in large apps explains two things:

- Why automated tests are a necessity

- Why you won’t find any significantly sized apps with 100% code coverage
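To put a rough number on that explosion, here is a minimal sketch that assumes the simplest possible case: a program made of independent two-way decisions and no loops. The helper names are hypothetical. Each extra `if` doubles the number of paths.

```python
from itertools import product


def enumerate_paths(num_decisions):
    """Every combination of True/False outcomes for independent `if`s."""
    return product((True, False), repeat=num_decisions)


def count_paths(num_decisions):
    # Each independent two-way decision doubles the number of paths.
    return 2 ** num_decisions


print(len(list(enumerate_paths(3))))  # 8
for n in (10, 20, 30):
    print(n, count_paths(n))  # 1024, 1048576, 1073741824
```

Thirty decisions already mean over a billion paths, and real code adds loops on top, so full path coverage is out of reach long before an app gets large.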

Code coverage at Google

Google would be nowhere near as big as it is today without extremely high quality assurance standards.

Luckily, Google blogs about their testing and QA a lot, so we can get insight from their methods.

In an article about measuring code coverage at Google, Marko Ivanković from their Zürich division points out why acting on the metric of code coverage is polarizing between different developers and companies:

“Some people think it is an extremely useful metric and that a certain percentage of coverage should be enforced on all code. Some think it is a useful tool to identify areas that need more testing but don’t necessarily trust that covered code is truly well tested. Others yet think that measuring coverage is actively harmful because it provides a false sense of security.”

To work out how much code coverage matters and how it should be acted on, Marko tested two types of coverage measurement at Google: daily and per-commit.

Daily coverage tests the work-in-progress code of the day, and helps engineers fix errors before they get too far along in a project.

Per-commit coverage narrows the focus to only the tests that need to run for a given commit to go through smoothly.
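The idea of running only the relevant tests can be sketched in a few lines of Python. This is not Google’s tooling: the dependency map, file names, and test names below are all hypothetical, and a real system would derive the map from the build graph rather than hard-code it.

```python
# Hypothetical map from each test to the source files it exercises.
TEST_DEPENDENCIES = {
    "test_login": {"auth.py", "session.py"},
    "test_search": {"search.py", "index.py"},
    "test_profile": {"profile.py", "session.py"},
}


def select_tests(changed_files, dependencies=TEST_DEPENDENCIES):
    """Return the tests that touch at least one file changed by the commit."""
    changed = set(changed_files)
    return sorted(
        name for name, files in dependencies.items() if files & changed
    )


print(select_tests(["session.py"]))  # ['test_login', 'test_profile']
```

A commit that only touches `session.py` skips `test_search` entirely, which is what keeps per-commit checks fast enough to run on every change.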

Here’s a screenshot of the internal tool they use to flag up erroneous lines:

And so — over 100,000 commits later — with this simple implementation, Google increased code coverage by 10% over all projects.

It’s also important to note that this part of their QC process is purely automated testing. (More about the rest of the process later.)

Code coverage at Facebook

Facebook uses data obtained through a code coverage tool to inform its automated testing.

According to an ex-Facebook engineer, code coverage is kept to a maximum with a few different methods that vary depending on the language of the code:

PHPUnit is used, unsurprisingly, to unit test the PHP, which is a huge part of Facebook’s architecture. The tests are run by developers, dedicated testers, and by the software itself.

For testing how content is displayed to the end user, Facebook’s engineers use Watir, both manually (a human going in and clicking buttons) and semi-automatically (a machine simulating a user that clicks all the buttons much faster).

Failed tests automatically notify developers and are logged in a database developers have access to.
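As a rough illustration of that loop (run tests, log failures, notify someone), here is a minimal Python sketch using the standard `unittest` and `sqlite3` modules. It is not Facebook’s system: the table layout, the `run_and_log` helper, and the `notify` callback are assumptions made for the example, and the failing test is broken on purpose.

```python
import sqlite3
import unittest


def build_example_case():
    """Return a tiny TestCase with one passing and one failing test."""

    class ExampleTests(unittest.TestCase):
        def test_addition(self):
            self.assertEqual(1 + 1, 2)

        def test_broken(self):
            self.assertEqual(1 + 1, 3)  # fails on purpose

    return ExampleTests


def run_and_log(test_case, connection, notify):
    """Run the tests, store each failure in the database, and notify."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS failures (test_id TEXT, details TEXT)"
    )
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(test_case)
    result = unittest.TestResult()
    suite.run(result)
    for test, details in result.failures + result.errors:
        connection.execute(
            "INSERT INTO failures (test_id, details) VALUES (?, ?)",
            (test.id(), details),
        )
        notify(test.id())  # hypothetical hook: email, chat message, etc.
    connection.commit()
    return result


notified = []
db = sqlite3.connect(":memory:")
result = run_and_log(build_example_case(), db, notified.append)
print(result.testsRun, "tests run,", len(notified), "failure logged")
```

Here `notified.append` stands in for whatever alerting channel a team actually uses.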

As you can guess, there are huge teams of developers at Facebook, and working on 61,000,000 lines of code (with a near-infinite number of ways these lines can interact) would start to cause serious problems without a sound culture of testing and strict guidelines for code coverage…

However, one of the more interesting things about Facebook’s testing process and attitude towards QA is its acceptance of its own flaws.

Evan Priestley, ex-Facebook engineer, says there aren’t any pure QC roles at the company, and employees are solely responsible for writing the tests for their own code.

This is because social media is non-essential. Bugs won’t cause a space rocket to burn up in the atmosphere.

“By paying less attention to quality, Facebook has been able to focus on other things, like making the company a fun place to work at that can attract and retain talented engineers. Facebook would probably be less fun if it cared more about quality. […] Social networking isn’t really critical to people. It’s important, but it’s not banking or space shuttles or nuclear reactors. It’s not bridges or cars. It’s not even email (at least, in most cases) or phone calls. This also gives Facebook more margin to work with.”

How Google tests software

In an article from 2011, InfoQ reports that Google’s testing department is relatively small for the number of developers but works well because, like Facebook, every engineer is responsible for their own code’s tests.

Google’s achieved this by creating a culture of testing, and not an abstract, disconnected testing department. With 2 billion lines of code to work with, this alone is a huge accomplishment.

“Quality is a development issue, not a testing issue. To the extent that we are able to embed testing practice inside development, we have created a process that is hyper incremental where mistakes can be rolled back if any one increment turns out to be too buggy. We’ve not only prevented a lot of customer issues, we have greatly reduced the number of testers necessary to ensure the absence of recall-class bugs.”

The way Google explains it is that product teams own quality, not testers. The testers write automation that allows developers to test. Nothing more, nothing less.

The benefits of this are:

- Developers and testers are on equal footing

- A many-to-one dev-to-test ratio

- Developers can focus on code, because the automation the testers build keeps the time spent on testing low

As you might expect from a company known to build anything and everything, Google uses its own tool for tracking tests, the Google Test Case Manager, alongside automated tests.

How Facebook tests software

Facebook focuses on code ownership to make sure each developer is personally responsible for the quality of their own work.

This doesn’t mean it’s not peer-reviewed, however:

“Every line of code that’s written is reviewed by a different engineer than the original author. This serves multiple purposes: the original engineer is motivated to ensure that the code is of high quality, the reviewer comes with a fresh mind and might find defects or suggest alternatives, and, in general, knowledge about coding practices and the code itself spreads throughout the company.”

Since Facebook has a huge number of beta testers (i.e. unsuspecting users to whom new features are dark launched), it can deploy new builds to tiny fractions of its user base and test the functionality of new features without causing much trouble for the rest.
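One common way to pick that tiny fraction is to hash each user into a stable bucket. The sketch below is a generic illustration of percentage rollouts in Python, not Facebook’s actual mechanism; the function name, feature name, and bucket count are assumptions.

```python
import hashlib


def in_rollout(user_id, feature, percent):
    """Return True if this user falls inside the rollout percentage."""
    key = f"{feature}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % 10_000  # buckets 0..9999, i.e. 0.01% steps
    return bucket < percent * 100


users = range(100_000)
enabled = sum(in_rollout(u, "new_timeline", 1.0) for u in users)
print(f"{enabled} of {len(users)} users see the feature")
```

Because the bucket depends only on the user and the feature name, the same user gets the same answer on every request, and raising `percent` only ever adds users to the group.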

Combine this with its lack of official testers and policies of code ownership, and you’ve got a development environment with major benefits:

- Engineers can quickly get out new features and make iterations

- Issues can be fixed before 99.999% of the user base notices

- There’s no need for dedicated testers, and the company culture around code quality is better

Tools Facebook uses for testing include PHPUnit, Watir, Boost, JUnit, and HipHop (internally developed software).

The culture of testing

Traditionally, software teams have development to write code, quality control to test it, and quality assurance to make sure the whole process is efficient and watertight.

With Google and Facebook, however, it’s obvious that testing isn’t built into the organizational structure in that traditional way.

Both companies emphasize personal responsibility (with Facebook shaming engineers who commit bad code), and see testing as part of the culture, not just a department somewhere in the building that has to clean up after the developers.

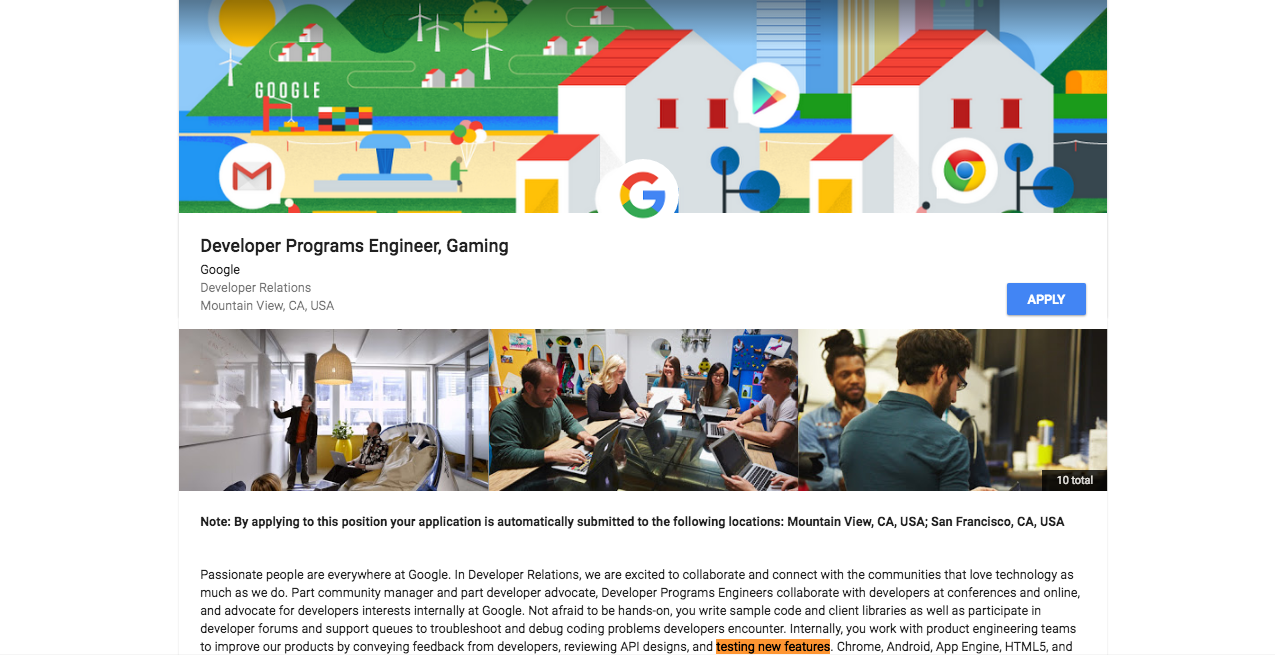

In Google’s job description for a game-related API developer, it specifies the candidate will “work with product engineering teams to improve our products by conveying feedback from developers, reviewing API designs, and testing new features”, amongst other things.

Google hires engineers who aren’t afraid of testing, and builds the process from there.

The same goes for Facebook. It makes clear to developers in job descriptions that testing will be a part of the job. A release engineer, for example, is responsible for “managing the source code management system, automating builds and regression testing, building tools and monitoring used in software deployments”.

At Process Street, every pull request must be submitted with a test. Code is peer-reviewed, must pass automated tests, then is reviewed by Cameron, our CTO, and demoed in a meeting.

After that, we beta test the feature amongst our own employees and dark launch it for a few weeks before monitoring support for bugs. This means we can get code out much quicker than if we were to agonize over testing. Google and Facebook use the same methods, carried over from when they were startups like us.

Microsoft, however, takes an entirely different approach and employs almost as many testers as it does developers. Whether or not that’s doing wonders for their software is another issue entirely…

How do you make sure your software’s free of errors? Let me know in the comments!


Benjamin Brandall

Benjamin Brandall is a content marketer at Process Street.